Professional Web System 936932741 for Performance

The professional web system 936932741 prioritizes performance through a data-driven, modular architecture. It aligns resource allocation with latency budgets, uses real-time analytics, and favors independent services with clear ownership to improve fault tolerance. Dashboards reveal hotspots, while benchmarks and cost–benefit analyses guide disciplined iteration. Caching, asynchrony, and scalable design are treated as core patterns. The approach promises measurable speed gains, but its trade-offs and long-term implications deserve careful examination before adoption.

Why a Performance-First Web System Matters

In modern web applications, performance directly shapes user satisfaction, engagement, and conversion. A performance-first posture ties technical decisions to measurable outcomes, backed by continuous monitoring and controlled experiments. Latency budgets and throughput tuning are the concrete levers: they make trade-offs explicit and show where resources should go. This discipline supports reliability and scalability while leaving room to ship new features without regressing performance.
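As a rough illustration of latency budgeting, the sketch below splits a hypothetical 200 ms end-to-end budget across three request stages and reports where measured latency exceeds its share. The stage names, budgets, and measurements are invented for the example; in practice the measured values would come from monitoring (for instance, a per-stage p95).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Stage:
    name: str
    budget_ms: float    # share of the end-to-end budget allotted to this stage
    measured_ms: float  # observed latency for this stage (hypothetical numbers below)


def check_budget(total_budget_ms: float, stages: List[Stage]) -> List[str]:
    """Return human-readable budget violations; an empty list means everything fits."""
    problems = []
    allotted = sum(s.budget_ms for s in stages)
    if allotted > total_budget_ms:
        problems.append(f"allotted {allotted} ms exceeds total budget {total_budget_ms} ms")
    for s in stages:
        if s.measured_ms > s.budget_ms:
            over = s.measured_ms - s.budget_ms
            problems.append(f"{s.name} over budget by {over:.1f} ms")
    measured = sum(s.measured_ms for s in stages)
    if measured > total_budget_ms:
        problems.append(f"end-to-end {measured:.1f} ms exceeds {total_budget_ms} ms")
    return problems


# Hypothetical 200 ms budget split across three stages.
stages = [
    Stage("edge/TLS", budget_ms=30, measured_ms=22),
    Stage("api", budget_ms=120, measured_ms=135),
    Stage("render", budget_ms=50, measured_ms=31),
]
for issue in check_budget(200, stages):
    print(issue)
```

This run prints a single line, `api over budget by 15.0 ms`: the request path as a whole (188 ms) still fits, but one stage has consumed more than its share, which is the kind of trade-off a per-stage budget is meant to surface.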

Core Architecture for High-Speed Reliability

A robust core architecture for high-speed reliability integrates modular, horizontally scalable components designed to minimize latency and maximize fault tolerance.

The design emphasizes modular interfaces, independent services, and clear ownership boundaries.

Scaling strategies are evaluated against defined latency budgets, guiding capacity planning and failover tests.
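One way to evaluate a scaling strategy against a latency budget is to run load tests at several request rates and keep the highest rate whose p99 stays within budget. The sketch below assumes hypothetical load-test samples (100 latencies per rate) and uses the nearest-rank percentile; real capacity planning would use far larger samples and repeated runs.

```python
import math
from typing import Dict, List, Optional


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile (0 < p <= 100) of a non-empty sample list."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]


def max_safe_rps(results: Dict[int, List[float]], p99_budget_ms: float) -> Optional[int]:
    """Highest tested request rate whose p99 latency fits the budget, or None."""
    safe = [rps for rps, latencies in results.items()
            if percentile(latencies, 99) <= p99_budget_ms]
    return max(safe) if safe else None


# Hypothetical load-test results: requests/second -> observed latencies (ms).
results = {
    500: [20.0] * 95 + [60.0] * 5,
    1000: [25.0] * 95 + [90.0] * 5,
    2000: [40.0] * 95 + [250.0] * 5,
}
print(max_safe_rps(results, p99_budget_ms=100.0))  # 1000
```

With a 100 ms p99 budget, the 2,000 req/s level fails because its slowest 5% of requests take 250 ms, so the sketch reports 1,000 req/s as the planning ceiling even though median latency at 2,000 req/s looks healthy.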

Metrics drive each optimization cycle, keeping performance predictable while leaving the team free to iterate on and evolve the system.

Real-Time Analytics to Drive Live Optimizations

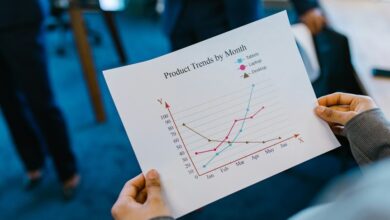

Real-time analytics underpin live optimizations by providing continuous visibility into system behavior, performance hotspots, and user interactions.

The approach emphasizes disciplined data collection, verifiable metrics, and actionable insights drawn from real-time dashboards.

Latency budgeting acts as a governance mechanism: it ties service-level expectations to what the instrumentation actually measures, so teams can make rapid, autonomous adjustments that protect user experience and system stability as workloads change.
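To show how a latency budget can act as a live check, the following sketch keeps a sliding window of recent request latencies and flags a breach when the share of requests over budget exceeds an allowed ratio. The class name, window size, and thresholds are hypothetical; a production system would typically feed such a check from its metrics pipeline rather than from in-process calls.

```python
from collections import deque


class LatencyBudgetMonitor:
    """Flags a breach when too many recent requests exceed the latency budget.

    Hypothetical SLO: at most `max_violation_ratio` of the last `window`
    requests may take longer than `budget_ms`.
    """

    def __init__(self, budget_ms: float, window: int = 1000, max_violation_ratio: float = 0.01):
        if window < 1:
            raise ValueError("window must be at least 1")
        self.budget_ms = budget_ms
        self.max_violation_ratio = max_violation_ratio
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.violations = 0  # over-budget samples currently inside the window

    def record(self, latency_ms: float) -> None:
        # Before the deque evicts its oldest sample, remove it from the violation count.
        if len(self.samples) == self.samples.maxlen and self.samples[0] > self.budget_ms:
            self.violations -= 1
        self.samples.append(latency_ms)
        if latency_ms > self.budget_ms:
            self.violations += 1

    def violation_ratio(self) -> float:
        return self.violations / len(self.samples) if self.samples else 0.0

    def breached(self) -> bool:
        return self.violation_ratio() > self.max_violation_ratio


monitor = LatencyBudgetMonitor(budget_ms=200, window=100, max_violation_ratio=0.05)
for _ in range(95):
    monitor.record(120.0)
for _ in range(5):
    monitor.record(450.0)
print(monitor.violation_ratio(), monitor.breached())  # 0.05 False
monitor.record(480.0)  # evicts a 120 ms sample; violations become 6 of 100
print(monitor.breached())  # True
```

Keeping a running violation count instead of rescanning the window makes each update constant-time, which matters when the check runs on every request.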

Practical Patterns: Caching, Asynchrony, and Scaling

Caching, asynchrony, and scaling together can raise throughput and resilience: they decouple components, smooth out latency, and let resources grow or shrink with demand. Practitioners quantify their effects with latency budgets and load models, which in turn guide cache invalidation policies and the design of asynchronous pipelines. The aim is predictable capacity ceilings, lower tail latency, and resilient failover, validated through concrete benchmarks, cost–benefit analyses, and disciplined iteration across service boundaries.
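Caching and cache invalidation can be sketched with a small in-process cache whose entries expire after a fixed time-to-live (TTL) and can also be dropped explicitly when the underlying data changes. This is a minimal, single-threaded sketch; `TTLCache`, `get_or_load`, and `load_profile` are hypothetical names, and the injectable clock exists only so expiry can be demonstrated without waiting.

```python
import time
from typing import Callable, Dict, Generic, Hashable, Tuple, TypeVar

V = TypeVar("V")


class TTLCache(Generic[V]):
    """Entries expire after `ttl_s` seconds or when explicitly invalidated."""

    def __init__(self, ttl_s: float, clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock  # injectable so tests can control time
        self._entries: Dict[Hashable, Tuple[float, V]] = {}  # key -> (expires_at, value)
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key: Hashable, loader: Callable[[], V]) -> V:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: call the slow source and store a fresh copy.
        self.misses += 1
        value = loader()
        self._entries[key] = (now + self.ttl_s, value)
        return value

    def invalidate(self, key: Hashable) -> None:
        """Drop an entry immediately, e.g. after the underlying record is written."""
        self._entries.pop(key, None)


fake_now = [0.0]
cache = TTLCache(ttl_s=30.0, clock=lambda: fake_now[0])


def load_profile() -> dict:
    return {"name": "example"}  # stands in for a slow database or service call


cache.get_or_load("user:42", load_profile)  # miss: loader runs
cache.get_or_load("user:42", load_profile)  # hit
fake_now[0] = 31.0
cache.get_or_load("user:42", load_profile)  # expired: loader runs again
cache.invalidate("user:42")
cache.get_or_load("user:42", load_profile)  # invalidated: loader runs again
print(cache.hits, cache.misses)  # 1 3
```

The TTL bounds how stale a cached value can get, while `invalidate` handles writes that must be visible immediately. The sketch has no size limit and no locking, so a shared, multi-threaded service would need an eviction policy (such as LRU) and synchronization, or an external cache.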

Conclusion

Taken together, the performance-first web system ties architectural discipline to measurable outcomes. Independent services, latency budgets, and real-time dashboards support fault tolerance and predictable response times. Practical patterns such as caching, asynchrony, and scalable design are adopted only after rigorous benchmarking and cost–benefit analysis. The result is a resilient platform where performance initiatives are tracked, validated, and continuously optimized, much like a well-tuned orchestra in which each instrument contributes to a fast, coherent performance.